Active learning (machine learning)


Active learning is a special case of machine learning in which a learning algorithm can interactively query a human user (or some other information source) to label new data points with the desired outputs. The human user must possess knowledge or expertise in the problem domain, including the ability to consult authoritative sources when necessary.[1][2][3] In the statistics literature, it is sometimes also called optimal experimental design.[4] The information source is also called the teacher or oracle.

There are situations in which unlabeled data is abundant but manual labeling is expensive. In such a scenario, learning algorithms can actively query the user/teacher for labels. This type of iterative supervised learning is called active learning. Since the learner chooses the examples, the number of examples needed to learn a concept can often be much lower than the number required in normal supervised learning. With this approach, there is a risk that the algorithm is overwhelmed by uninformative examples. Recent developments are dedicated to multi-label active learning,[5] hybrid active learning,[6] and active learning in a single-pass (on-line) context,[7] combining concepts from the field of machine learning (e.g. conflict and ignorance) with adaptive, incremental learning policies from the field of online machine learning. Active learning can also speed up the development of a machine learning algorithm in cases where comparable updates would otherwise require a quantum computer or a supercomputer.[8]

Large-scale active learning projects may benefit from crowdsourcing frameworks such as Amazon Mechanical Turk that include many humans in the active learning loop.

Definitions

Let T be the total set of all data under consideration. For example, in a protein engineering problem, T would include all proteins that are known to have a certain interesting activity and all additional proteins that one might want to test for that activity.

During each iteration, i, T is broken up into three subsets:

- $T_{K,i}$: Data points where the label is known.

- $T_{U,i}$: Data points where the label is unknown.

- $T_{C,i}$: A subset of $T_{U,i}$ that is chosen to be labeled.

Most current research in active learning concerns the best method for choosing the data points for $T_{C,i}$.
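The iteration over the three subsets can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function names, the integer data set, the threshold oracle, and the "query the point nearest the current boundary" rule are all assumptions made for the example.

```python
def active_learning_loop(T, seed, oracle, choose, rounds):
    """Run up to `rounds` iterations and return the labeled set as {point: label}."""
    # Labeled set at iteration 0: a small seed whose labels are known.
    T_K = {x: oracle(x) for x in seed}
    for _ in range(rounds):
        # Unlabeled set: every point of T that has no label yet.
        T_U = [x for x in T if x not in T_K]
        # Chosen set: the subset of the unlabeled points sent to the oracle.
        T_C = choose(T_K, T_U) if T_U else []
        if not T_C:
            break  # nothing informative is left to query
        for x in T_C:
            T_K[x] = oracle(x)
    return T_K


def choose_near_boundary(T_K, T_U):
    """Hypothetical query rule for a 1-D threshold concept.

    Picks the unlabeled point closest to the midpoint between the largest
    known negative and the smallest known positive example.
    """
    lo = max(x for x, y in T_K.items() if y == 0)
    hi = min(x for x, y in T_K.items() if y == 1)
    between = [x for x in T_U if lo < x < hi]
    if not between:
        return []
    mid = (lo + hi) / 2
    return [min(between, key=lambda x: abs(x - mid))]


# Toy problem (assumed): integers 0..20, positive when x >= 13.
T = list(range(21))
labeled = active_learning_loop(
    T, seed=[0, 20], oracle=lambda x: int(x >= 13),
    choose=choose_near_boundary, rounds=10,
)
print(len(labeled), "of", len(T), "points were labeled")
```

In this toy setting the learner locates the decision boundary after labeling only a small fraction of T, which is the effect described in the introduction.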

Scenarios

- Pool-Based Sampling: In this approach, which is the best-known scenario,[9] the learning algorithm attempts to evaluate the entire dataset before selecting data points (instances) for labeling. It is often initially trained on a fully labeled subset of the data using a machine-learning method such as logistic regression or SVM that yields class-membership probabilities for individual data instances. The candidate instances are those for which the prediction is most ambiguous. Instances are drawn from the entire data pool and assigned a confidence score, a measurement of how well the learner "understands" the data. The system then selects the instances for which it is the least confident and queries the teacher for the labels.

The theoretical drawback of pool-based sampling is that it is memory-intensive and is therefore limited in its capacity to handle enormous datasets, but in practice, the rate-limiting factor is that the teacher is typically a (fatigable) human expert who must be paid for their effort, rather than computer memory.

- Stream-Based Selective Sampling: Here, each consecutive unlabeled instance is examined one at a time, with the machine evaluating the informativeness of each item against its query parameters. The learner decides for itself whether to assign a label or query the teacher for each data point. In contrast to pool-based sampling, the obvious drawback of stream-based methods is that the learning algorithm does not have sufficient information, early in the process, to make a sound assign-label-vs-ask-teacher decision, and it does not capitalize as efficiently on the presence of already labeled data. Therefore, the teacher is likely to spend more effort in supplying labels than with the pool-based approach.

- Membership Query Synthesis: This is where the learner generates synthetic data from an underlying natural distribution. For example, if the dataset consists of pictures of humans and animals, the learner could send a clipped image of a leg to the teacher and ask whether this appendage belongs to an animal or a human. This is particularly useful if the dataset is small.[10]

The challenge here, as with all synthetic-data-generation efforts, is in ensuring that the synthetic data is consistent with the constraints on real data. As the number of variables/features in the input data increases, and when strong dependencies between variables exist, it becomes increasingly difficult to generate synthetic data with sufficient fidelity.

For example, to create a synthetic data set for human laboratory-test values, the sum of the various white blood cell (WBC) components in a WBC differential must equal 100, since the component numbers are really percentages. Similarly, the enzymes alanine transaminase (ALT) and aspartate transaminase (AST) measure liver function (though AST is also produced by other tissues, e.g., lung, pancreas). A synthetic data point with AST at the lower limit of the normal range (8-33 units/L) and an ALT several times above the normal range (4-35 units/L) in a simulated chronically ill patient would be physiologically impossible.
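The practical difference between the pool-based and stream-based scenarios above can be illustrated with a short Python sketch. It assumes a fixed one-dimensional logistic model as a stand-in for the trained classifier; `prob_positive`, `confidence`, the data values, and the confidence threshold are hypothetical choices for the example, not part of any particular library.

```python
import math


def prob_positive(x, w=1.0, b=0.0):
    """Stand-in for a trained model: P(y = 1 | x) under a 1-D logistic model (assumed)."""
    return 1.0 / (1.0 + math.exp(-(w * x + b)))


def confidence(x):
    """Probability the model assigns to its own predicted class."""
    p = prob_positive(x)
    return max(p, 1.0 - p)


def pool_based_queries(pool, k):
    """Score the entire pool first, then return the k least-confident instances."""
    return sorted(pool, key=confidence)[:k]


def stream_based_queries(stream, threshold):
    """Examine instances one at a time, in arrival order.

    An instance is sent to the teacher only if the model's confidence is
    below `threshold`; otherwise the learner keeps its own label.
    """
    queried = []
    for x in stream:
        if confidence(x) < threshold:
            queried.append(x)
    return queried


data = [-3.0, 0.2, 2.5, -0.1, 1.0, -4.0]
print(pool_based_queries(data, k=2))                # the two most ambiguous points
print(stream_based_queries(data, threshold=0.75))   # everything below the threshold
```

With a fixed labeling budget, the pool-based selector can rank all candidates before spending it, whereas the stream-based selector must commit to each decision as the instance arrives, so the number of queries depends on the threshold rather than on a budget.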

Query strategies

Algorithms for determining which data points should be labeled can be organized into a number of different categories, based upon their purpose:[1]

- Balance exploration and exploitation: the choice of examples to label is seen as a dilemma between exploring and exploiting the data space representation. This strategy manages the trade-off by modelling the active learning problem as a contextual bandit problem. For example, Bouneffouf et al.[11] propose a sequential algorithm named Active Thompson Sampling (ATS), which, in each round, assigns a sampling distribution on the pool, samples one point from this distribution, and queries the oracle for the label of this sample point.

- Expected model change: label those points that would most change the current model.

- Expected error reduction: label those points that would most reduce the model's generalization error.

- Exponentiated Gradient Exploration for Active Learning:[12] the author proposes a sequential algorithm named exponentiated gradient (EG)-active that is intended to improve any active learning algorithm through an optimal random exploration.

- Random Sampling: a sample is randomly selected.[13]

- Uncertainty sampling: label those points for which the current model is least certain as to what the correct output should be.

- Entropy Sampling: The entropy formula is used on each sample, and the sample with the highest entropy is considered to be the least certain.[13]

- Margin Sampling: The sample with the smallest difference between the two highest class probabilities is considered to be the most uncertain.[13]

- Least Confident Sampling: The sample with the smallest best probability is considered to be the most uncertain.[13]

- Query by committee: a variety of models are trained on the current labeled data and vote on the output for unlabeled data; label those points on which the "committee" disagrees the most.

- Querying from diverse subspaces or partitions:[14] When the underlying model is a forest of trees, the leaf nodes might represent (overlapping) partitions of the original feature space. This offers the possibility of selecting instances from non-overlapping or minimally overlapping partitions for labeling.

- Variance reduction: label those points that would minimize output variance, which is one of the components of error.

- Conformal prediction: predicts that a new data point will have a label similar to old data points in some specified way; the degree of similarity within the old examples is used to estimate the confidence in the prediction.[15]

- Mismatch-first farthest-traversal: The primary selection criterion is the prediction mismatch between the current model and nearest-neighbour prediction, which targets wrongly predicted data points. The second selection criterion is the distance to previously selected data, farthest first, which aims to optimize the diversity of the selected data.[16]

- User-Centered Labeling Strategies: Learning is accomplished by applying dimensionality reduction to graphs and figures like scatter plots. The user is then asked to label the compiled data (categorical, numerical, relevance scores, relation between two instances).[17]
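The three uncertainty measures in the list above (entropy, margin, and least confident) can be compared directly on predicted class probabilities. The following Python sketch assumes the model already supplies a probability vector per unlabeled sample; the function names and the three example vectors are made up for illustration.

```python
import math


def entropy(probs):
    """Shannon entropy of a class-probability vector; higher means less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def margin(probs):
    """Difference between the two highest class probabilities; smaller means less certain."""
    top, second = sorted(probs, reverse=True)[:2]
    return top - second


def best_probability(probs):
    """Probability of the most likely class; smaller means less certain."""
    return max(probs)


def select(pool, strategy):
    """Return the key of the sample that `strategy` considers most uncertain."""
    if strategy == "entropy":
        return max(pool, key=lambda k: entropy(pool[k]))
    if strategy == "margin":
        return min(pool, key=lambda k: margin(pool[k]))
    if strategy == "least_confident":
        return min(pool, key=lambda k: best_probability(pool[k]))
    raise ValueError(f"unknown strategy: {strategy}")


# Hypothetical predicted probabilities for three unlabeled samples.
pool = {
    "a": [0.5, 0.5, 0.0],
    "b": [0.4, 0.3, 0.3],
    "c": [0.9, 0.05, 0.05],
}
for name in ("entropy", "margin", "least_confident"):
    print(name, "->", select(pool, name))
```

On this pool, margin sampling selects a different sample from the other two measures, because sample "a" has a tie between its top two classes while sample "b" spreads probability more evenly over all three. With only two classes the three measures rank samples identically.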

A wide variety of algorithms have been studied that fall into these categories.[1][4] While the traditional AL strategies can achieve remarkable performance, it is often challenging to predict in advance which strategy is the most suitable in a particular situation. In recent years, meta-learning algorithms have been gaining in popularity. Some of them have been proposed to tackle the problem of learning AL strategies instead of relying on manually designed strategies. A benchmark that compares meta-learning approaches to active learning with traditional heuristic-based active learning may indicate whether "learning active learning" is at the crossroads.[18]
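Among the strategies listed above, query by committee is easy to illustrate in a few lines. The Python sketch below measures disagreement with vote entropy, which is one common choice; the text does not say which disagreement measure is meant, so this is an assumption, as are the three threshold classifiers that form the committee.

```python
import math
from collections import Counter


def vote_entropy(votes):
    """Entropy of the committee's vote distribution; 0 when all members agree."""
    n = len(votes)
    return -sum((c / n) * math.log(c / n) for c in Counter(votes).values())


def most_disputed(pool, committee):
    """Return the unlabeled point on which the committee disagrees the most."""
    return max(pool, key=lambda x: vote_entropy([member(x) for member in committee]))


def make_threshold_classifier(t):
    """Hypothetical committee member: predicts class 1 when x >= t."""
    return lambda x: int(x >= t)


committee = [make_threshold_classifier(t) for t in (0.3, 0.5, 0.7)]
pool = [0.1, 0.4, 0.9]
print(most_disputed(pool, committee))
```

Points far from every member's threshold receive unanimous votes and are never selected; only the point that falls between the members' thresholds splits the committee.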

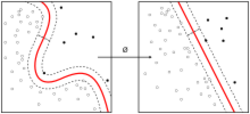

Minimum marginal hyperplane

Some active learning algorithms are built upon support-vector machines (SVMs) and exploit the structure of the SVM to determine which data points to label. Such methods usually calculate the margin, W, of each unlabeled datum in $T_{U,i}$ and treat W as an n-dimensional distance from that datum to the separating hyperplane.

Minimum Marginal Hyperplane methods assume that the data with the smallest W are those that the SVM is most uncertain about and therefore should be placed in $T_{C,i}$ to be labeled. Other similar methods, such as Maximum Marginal Hyperplane, choose data with the largest W. Tradeoff methods choose a mix of the smallest and largest Ws.
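For a linear SVM, the selection step can be sketched as follows. The sketch assumes the hyperplane is given by a weight vector `w` and bias `b` taken from an already trained model, and it computes W as the Euclidean distance |w·x + b| / ‖w‖; the function names and the numeric values are hypothetical.

```python
import math


def distance_to_hyperplane(x, w, b):
    """W for one datum: |w . x + b| / ||w|| for the hyperplane w . x + b = 0."""
    dot = sum(wi * xi for wi, xi in zip(w, x))
    norm = math.sqrt(sum(wi * wi for wi in w))
    return abs(dot + b) / norm


def minimum_marginal(unlabeled, w, b, k):
    """Choose the k unlabeled points closest to the hyperplane (smallest W)."""
    return sorted(unlabeled, key=lambda x: distance_to_hyperplane(x, w, b))[:k]


def maximum_marginal(unlabeled, w, b, k):
    """Choose the k unlabeled points farthest from the hyperplane (largest W)."""
    return sorted(unlabeled, key=lambda x: distance_to_hyperplane(x, w, b), reverse=True)[:k]


# Assumed hyperplane 3*x1 + 4*x2 - 5 = 0 and four unlabeled points.
w, b = (3.0, 4.0), -5.0
unlabeled = [(1.0, 1.0), (3.0, 4.0), (0.0, 1.0), (-1.0, -2.0)]
print(minimum_marginal(unlabeled, w, b, k=2))
print(maximum_marginal(unlabeled, w, b, k=1))
```

A tradeoff method in the sense described above could take some points from each of the two selections.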

Notes

- [1] Settles, Burr (2010). "Active Learning Literature Survey". University of Wisconsin–Madison. http://pages.cs.wisc.edu/~bsettles/pub/settles.activelearning.pdf.

- [2] Rubens, Neil; Elahi, Mehdi; Sugiyama, Masashi; Kaplan, Dain (2016). "Active Learning in Recommender Systems". In Ricci, Francesco; Rokach, Lior; Shapira, Bracha. Recommender Systems Handbook (2 ed.). Springer US. doi:10.1007/978-1-4899-7637-6. ISBN 978-1-4899-7637-6. http://machinelearning202.pbworks.com/f/Rubens-Active-Learning-RecSysHB2010.pdf.

- [3] Das, Shubhomoy; Wong, Weng-Keen; Dietterich, Thomas; Fern, Alan; Emmott, Andrew (2016). "Incorporating Expert Feedback into Active Anomaly Discovery". In Bonchi, Francesco; Domingo-Ferrer, Josep; Baeza-Yates, Ricardo et al. IEEE 16th International Conference on Data Mining. IEEE. pp. 853–858. doi:10.1109/ICDM.2016.0102. ISBN 978-1-5090-5473-2.

- [4] Olsson, Fredrik (April 2009). "A literature survey of active machine learning in the context of natural language processing". http://eprints.sics.se/3600/.

- [5] Yang, Bishan; Sun, Jian-Tao; Wang, Tengjiao; Chen, Zheng (2009). "Effective multi-label active learning for text classification". Proceedings of the 15th ACM SIGKDD international conference on Knowledge discovery and data mining - KDD '09. pp. 917. doi:10.1145/1557019.1557119. ISBN 978-1-60558-495-9. https://www.microsoft.com/en-us/research/wp-content/uploads/2009/01/sigkdd09-yang.pdf.

- [6] Lughofer, Edwin (February 2012). "Hybrid active learning for reducing the annotation effort of operators in classification systems". Pattern Recognition 45 (2): 884–896. doi:10.1016/j.patcog.2011.08.009. Bibcode: 2012PatRe..45..884L.

- [7] Lughofer, Edwin (2012). "Single-pass active learning with conflict and ignorance". Evolving Systems 3 (4): 251–271. doi:10.1007/s12530-012-9060-7.

- [8] Novikov, Ivan (2021). "The MLIP package: moment tensor potentials with MPI and active learning". IOP Publishing 2 (2): 3,4. doi:10.1088/2632-2153/abc9fe. https://dx.doi.org/10.1088/2632-2153/abc9fe.

- [9] DataRobot. "Active learning machine learning: What it is and how it works". DataRobot Inc. https://www.datarobot.com/blog/active-learning-machine-learning.

- [10] Wang, Liantao; Hu, Xuelei; Yuan, Bo; Lu, Jianfeng (2015-01-05). "Active learning via query synthesis and nearest neighbour search". Neurocomputing 147: 426–434. doi:10.1016/j.neucom.2014.06.042. http://espace.library.uq.edu.au/view/UQ:344582/UQ344582_OA.pdf.

- [11] Bouneffouf, Djallel; Laroche, Romain; Urvoy, Tanguy; Féraud, Raphael; Allesiardo, Robin (2014). "Contextual Bandit for Active Learning: Active Thompson". In Loo, C. K. Neural Information Processing. Lecture Notes in Computer Science. 8834. pp. 405–412. doi:10.1007/978-3-319-12637-1_51. HAL Id: hal-01069802. ISBN 978-3-319-12636-4. https://hal.archives-ouvertes.fr/hal-01069802.

- [12] Bouneffouf, Djallel (8 January 2016). "Exponentiated Gradient Exploration for Active Learning". Computers 5 (1): 1. doi:10.3390/computers5010001.

- [13] Faria, Bruno; Perdigão, Dylan; Brás, Joana; Macedo, Luis (2022). "The Joint Role of Batch Size and Query Strategy in Active Learning-Based Prediction - A Case Study in the Heart Attack Domain". Lecture Notes in Computer Science. 13566. pp. 464–475. doi:10.1007/978-3-031-16474-3_38. ISBN 978-3-031-16473-6.

- [14] "shubhomoydas/ad_examples". https://github.com/shubhomoydas/ad_examples#query-diversity-with-compact-descriptions.

- [15] Makili, Lázaro Emílio; Sánchez, Jesús A. Vega; Dormido-Canto, Sebastián (2012-10-01). "Active Learning Using Conformal Predictors: Application to Image Classification". Fusion Science and Technology 62 (2): 347–355. doi:10.13182/FST12-A14626. ISSN 1536-1055.

- [16] Zhao, Shuyang; Heittola, Toni; Virtanen, Tuomas (2020). "Active learning for sound event detection". IEEE/ACM Transactions on Audio, Speech, and Language Processing.

- [17] Bernard, Jürgen; Zeppelzauer, Matthias; Lehmann, Markus; Müller, Martin; Sedlmair, Michael (June 2018). "Towards User-Centered Active Learning Algorithms". Computer Graphics Forum 37 (3): 121–132. doi:10.1111/cgf.13406. ISSN 0167-7055.

- [18] Desreumaux, Louis; Lemaire, Vincent (2020). "Learning Active Learning at the Crossroads? Evaluation and Discussion".
